Hopeful onlookers sometimes point to two possible escape hatches from the problems of burning fossil fuels. One escape hatch is “peak oil”–that is, the argument that production of fossil fuel resources is near or at its peak. In this view, the impending fall in fossil fuel production might well bring higher prices and other economic hardship, but at least emissions from burning fossil fuels would drop. The other escape hatch is a large rise in cost-competitive non-carbon sources of energy, like solar and wind, but also nuclear and hydroelectric power. If these sources of energy undercut fossil fuels on price, then the economy could make a transition away from fossil fuels to an economy that relied on cheaper and abundant energy from these other sources.

But there’s yet another possibility, and it’s the one laid out by Thomas Covert, Michael Greenstone, and Christopher R. Knittel in their article, “Will We Ever Stop Using Fossil Fuels?” appearing in the Winter 2016 issue of the Journal of Economic Perspectives. In this outcome, the supply of fossil fuels isn’t going to run out in the next few decades, and alternative non-carbon energy sources aren’t going to become cost-effective for enough uses in that timeframe to substantially reduce consumption of fossil fuels, either. One might wish it were otherwise. As the authors write: “After all, who wouldn’t prefer to consume energy on our current path and gradually switch to cleaner technologies as they become less expensive than fossil fuels? But the desirability of this outcome doesn’t assure that it will actually occur–or even that it will be possible.” Because they believe that neither of the two escape hatches from the problems of burning fossil fuels is likely to be available, they argue that addressing issues like climate change and conventional air pollutants will require a strong policy intervention to reduce the use of fossil fuels.

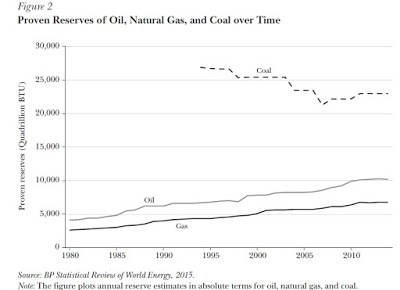

When it comes to the supply of fossil fuels, an important lesson to remember is that technological progress happens in many areas. It happens in solar and wind power, but it also happens in finding, developing, and extracting fossil fuels. Examples include the discovery of how to drill in ever-deeper water, as well as the more recent developments in getting oil and gas from tar sands and from hydraulic fracturing. As the authors write: “It is an empirical regularity that, for both oil and natural gas at any point in the last 30 years, the world has 50 years of reserves in the ground. The corollary, obviously, is that we discover new reserves, each year, roughly equal to that year’s consumption.” The figure above shows the growth in proven reserves of oil and gas over time.
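The arithmetic behind that corollary can be sketched in a few lines. This is only an illustration under stated assumptions: the function name `required_discoveries`, the units, and the numbers (5,000 units of reserves, 100 units of annual consumption, 1 percent growth) are hypothetical and do not come from the paper. It computes how much must be discovered in a year for reserves to stay at 50 times annual consumption.

```python
def required_discoveries(reserves, use_now, use_next, years_of_reserves=50.0):
    """Discoveries needed this year so that next year's reserves still
    equal `years_of_reserves` times next year's consumption.

    All quantities are in the same arbitrary unit (hypothetical numbers).
    """
    remaining = reserves - use_now          # what is left after this year's use
    target = years_of_reserves * use_next   # reserves needed to keep the ratio
    return target - remaining


# Flat consumption: discoveries simply replace what was burned.
flat = required_discoveries(5000.0, 100.0, 100.0)

# Consumption growing 1 percent: discoveries must exceed consumption.
growing = required_discoveries(5000.0, 100.0, 101.0)

print(flat, growing)  # 100.0 150.0
```

With flat consumption, discoveries equal the year’s consumption, which is the corollary the authors state. If consumption is rising, holding the ratio at 50 years requires discoveries that exceed consumption, so the “roughly equal” in the quotation is best read as an approximation.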

“Proven reserves” is a specific term referring to reserves that are available at (more-or-less) current prices, and given current levels of technology. Geologists also estimate fossil fuel “resources,” which are the quantities of fossil fuels known to exist, but not economically viable–yet. The known resources are roughly three to four times the size of the “proven reserves.” And then there are enormous other fossil fuel resources, like oil shale and methane hydrates, which are not currently counted as either reserves or resources, but technological developments over time could bring them into the market as well. As Covert, Greenstone, and Knittel write: “If the past 35 years is any guide, not only should we not expect to run out of fossil fuels any time soon, we should not expect to have less fossil fuels in the future than we do now. In short, the world is likely to be awash in fossil fuels for decades and perhaps even centuries to come.”

When thinking about non-carbon technologies, it would take a book-length manuscript to go through all the possible developments. The authors thus focus on a few key points. Global demand for energy seems certain to rise dramatically in the decades ahead with overall economic development in today’s low-income and emerging economies. The question about non-carbon energy sources is not whether they will expand (spoiler alert: they will expand), but whether they will expand so quickly and dramatically that they undercut fossil fuels in a wide array of uses. This outcome may be desirable, but that doesn’t make it likely or even possible. As the authors write:

[T]he International Energy Agency (2015) projects that fossil fuels will account for 79 percent of total energy supply in 2040 under the current, business-as-usual policies, which already takes into account some rise in these alternative noncarbon energy production technologies. In the medium-run of the next few decades, none of these alternatives seem to have the potential based on their production costs (that is, without government policies to raise the costs of carbon emissions) to reduce the use of fossil fuels dramatically below these projections.

The paper offers a few comments in passing about carbon capture technology, nuclear power, and hydro power, but the main focus is on solar and wind technologies as alternative methods of generating electricity, and on whether developments in battery technology will make fully electric cars viable. Overall, Covert, Greenstone, and Knittel write:

Our conclusion is that in the absence of substantial greenhouse gas policies, the US and the global economy are unlikely to stop relying on fossil fuels as the primary source of energy. The physical supply of fossil fuels is highly unlikely to run out, especially if future technological change makes major new sources like oil shale and methane hydrates commercially viable. Alternative sources of clean energy like solar and wind power, which can be used both to generate electricity and to fuel electric vehicles, have seen substantial progress in reducing costs, but at least in the short- and middle-term, they are unlikely to play a major role in base-load electrical capacity or in replacing petroleum-fueled internal combustion engines. Thus, the current, business-as-usual combination of markets and policies doesn’t seem likely to diminish greenhouse gases on their own.